Note: Amazon Redshift selects a join operator based on the distribution style of the tables and the location of the required data. To optimize query performance, the sort key and distribution key have been changed to 'eventid' for both tables. In the following example, a merge join is used instead of a hash join.
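
As a rough illustration of the note above, the Python sketch below only builds SQL strings: a CREATE TABLE statement that uses 'eventid' as both distribution key and sort key, and an EXPLAIN query for a join on that column. The table names (`sales`, `event`) and the extra columns are hypothetical; only the 'eventid' key comes from the text. Whether the planner actually picks a merge join also depends on how well sorted the tables are.

```python
# Sketch only: table names and extra columns are hypothetical placeholders.
# Only the 'eventid' distribution/sort key is taken from the note above.


def create_table_sql(table: str, extra_columns: str) -> str:
    """Build a CREATE TABLE statement keyed on eventid for distribution and sorting."""
    return (
        f"CREATE TABLE {table} (\n"
        f"    eventid INTEGER NOT NULL,\n"
        f"    {extra_columns}\n"
        f")\n"
        f"DISTKEY (eventid)\n"
        f"SORTKEY (eventid);"
    )


def explain_join_sql(left: str, right: str) -> str:
    """Build an EXPLAIN statement for a join on eventid so the join operator can be inspected."""
    return (
        f"EXPLAIN\n"
        f"SELECT l.eventid, COUNT(*)\n"
        f"FROM {left} AS l\n"
        f"JOIN {right} AS r ON l.eventid = r.eventid\n"
        f"GROUP BY l.eventid;"
    )


if __name__ == "__main__":
    print(create_table_sql("sales", "qtysold INTEGER"))
    print(create_table_sql("event", "eventname VARCHAR(200)"))
    print(explain_join_sql("sales", "event"))
```

Running the printed EXPLAIN statement against a cluster should show which join operator the planner chose for the two tables.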

Small correction: figure 4.1 (page 52) describes the numbers 0.1, 0.2 and 0.3 as redshifts. This is not exactly right. These numbers are related to the redshift, but they represent the fraction of time elapsed since the Big Bang.
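
To make the distinction concrete, here is a small Python sketch that converts between a time fraction and a redshift. It assumes a simple matter-dominated (Einstein-de Sitter) universe, where t/t0 = (1 + z)^(-3/2). That model is an assumption made for illustration and is not taken from the book, so the exact numbers will differ under other cosmological models.

```python
# Sketch under an assumed Einstein-de Sitter (matter-dominated) model:
#   t / t0 = (1 + z) ** (-3/2)
# where t0 is the present age of the universe and z is the redshift.


def redshift_from_time_fraction(fraction: float) -> float:
    """Return the redshift z at which the universe had the given fraction of its present age."""
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be in the interval (0, 1]")
    return fraction ** (-2.0 / 3.0) - 1.0


def time_fraction_from_redshift(z: float) -> float:
    """Return t / t0 for a given redshift z (inverse of the function above)."""
    if z < 0.0:
        raise ValueError("z must be non-negative")
    return (1.0 + z) ** -1.5


if __name__ == "__main__":
    for fraction in (0.1, 0.2, 0.3):
        z = redshift_from_time_fraction(fraction)
        print(f"t/t0 = {fraction:.1f} corresponds to z of about {z:.2f}")
```

Under this assumption a time fraction of 0.1 corresponds to a redshift of roughly 3.6, which shows that the two quantities are related but are not the same number.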

Redshift Premium – Astronomy 1.0.2

Explore space with the multiple award-winning professional planetarium software, Redshift.

View the night sky in unparalleled clarity, travel right through our galaxy and beyond, and look at planets, moons, asteroids, nebulae and other celestial bodies from close range. Explore the night sky from any desired viewpoint on the Earth or from moons and planets, travel into the past or into the future and discover first hand everything about stars, planets and other celestial bodies – Redshift introduces you to endless possibilities on your excursion through space.

Keep an eye on important celestial events with the help of the celestial calendar and experience these events simultaneously from different perspectives. Control your telescope with the help of Redshift and plan your sky observations using the individually customizable observation planner.

Features:

- Comprehensive Planetarium for realistic real-time calculation of celestial bodies of our galaxy in accordance with the latest research results

- Over 2.5 million stars, 1 million fascinating Deep Sky objects, 700,000 small planets, 2,000 known comets, all planets, dwarf planets and moons, as well as stars with exoplanets and known novae/supernovae

- Impressive 3D displays of more than 100 celestial objects

- 3D-flights between and around planets, their moons, stars, Deep Sky objects, galaxies and the Milky Way

- Land on planets and moons and view space from their surfaces

- Display of the surface characteristics of the Earth’s moon and planets

- Extensive astronomical data for each celestial object

- Comprehensive lexicon of astronomy

- 25 interactive guided tours with informative pictures, videos and animations

- Solar eclipse maps, as well as maps of satellite passes

- Sky calendar and comprehensive, customizable observation planner

- Telescope controls for all Meade and Celestron telescopes (with the exception of the currently not publicly released telescope control “Celestron NexStar Evolution”)

- Simultaneous display of a celestial event from different perspectives in the Multi-Window mode

- “On the fly” download of the current Gaia Super-catalogue with one billion stars

- State-of-the-art orbit data for satellites, comets and asteroids, as well as the addition of new discoveries through the Live-Update function

- Editing tool for the manual amendment of the databank

- Extensive time interval of 15,000 years (4713 BC to 9999 AD)

- Numerous panoramas and sound backgrounds, as well as Day/Night mode

- Exact numerical integration of the movement of asteroids and comets

- Five base coordinate systems

- Available in the English, French, German and Russian languages

What's New:

Version 1.0.2: Some smaller tweaks and optimizations make the app even better!

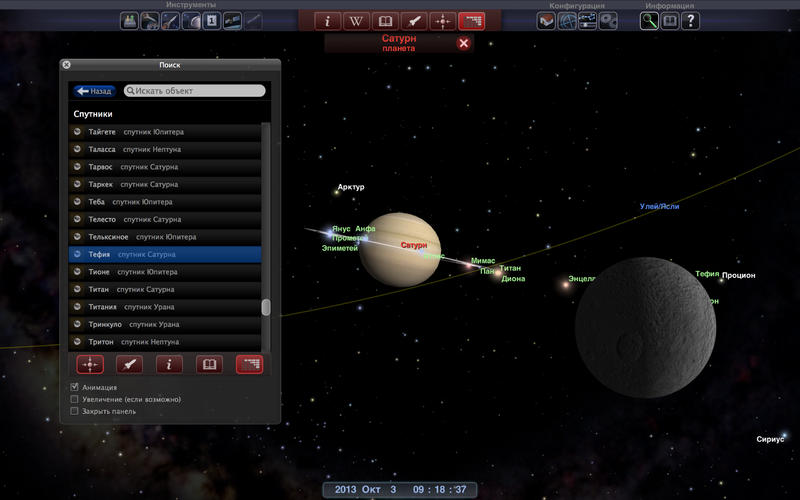

Screenshots:

- Title: Redshift Premium – Astronomy 1.0.2

- Developer: United Soft Media Verlag GmbH

- Compatibility: OS X 10.10 or later, 64-bit processor

- Language: English, French, German, Russian

- Size: 1.25 GB

Singer.io target for loading data to Amazon Redshift - PipelineWise compatible

Project description

Singer target that loads data into Amazon Redshift following the Singer spec.

This is a PipelineWise compatible target connector.

How to use it

The recommended method of running this target is to use it from PipelineWise. When running it from PipelineWise you don't need to configure this target with JSON files, and most things are automated. Please check the related documentation at Target Redshift.

If you want to run this Singer Target independently please read further.

Install

First, make sure Python 3 is installed on your system or follow these installation instructions for Mac or Ubuntu.

It's recommended to use a virtualenv.

To run

Like any other target that follows the Singer specification:

some-singer-tap | target-redshift --config [config.json]

It reads incoming messages from STDIN and uses the properties in config.json to upload data into Amazon Redshift.
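
To illustrate what reading Singer messages from STDIN involves, the sketch below parses newline-delimited JSON messages and counts RECORD messages per stream. It is not the target's implementation; the function name `count_records` is hypothetical, and the only things taken from the Singer spec are the JSON-per-line format and the `type` and `stream` fields.

```python
import json
import sys
from collections import Counter
from typing import Dict, Iterable


def count_records(lines: Iterable[str]) -> Dict[str, int]:
    """Count RECORD messages per stream in a sequence of Singer message lines."""
    counts: Counter = Counter()
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines between messages
        message = json.loads(line)
        # SCHEMA and STATE messages are ignored in this sketch.
        if message.get("type") == "RECORD":
            counts[message["stream"]] += 1
    return dict(counts)


if __name__ == "__main__":
    # Usage: some-singer-tap | python count_records.py
    print(count_records(sys.stdin))
```

A real target would additionally use the SCHEMA messages to create or alter tables and emit STATE messages after each successful load.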

Note: To avoid version conflicts, run taps and targets in separate virtual environments.

Configuration settings

Running the target connector requires a config.json file. Example with the minimal settings:
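
As a hedged sketch, the Python snippet below builds a config.json body containing only the properties marked as required in the option list of this README (host, port, user, password, dbname, s3_bucket). All values are hypothetical placeholders, and a real setup may need further options such as a target schema or AWS credentials, depending on the environment.

```python
import json

# Properties marked "Yes" in the Required? column of the option list.
REQUIRED_KEYS = ("host", "port", "user", "password", "dbname", "s3_bucket")


def build_minimal_config(**values) -> dict:
    """Return a config dict with only the required keys, failing if any is missing."""
    missing = [key for key in REQUIRED_KEYS if key not in values]
    if missing:
        raise ValueError(f"missing required settings: {', '.join(missing)}")
    return {key: values[key] for key in REQUIRED_KEYS}


# Placeholder values for illustration only; replace them with real settings.
example_config = build_minimal_config(
    host="example-cluster.example.com",
    port=5439,  # default Redshift port
    user="my_user",
    password="my_password",
    dbname="my_database",
    s3_bucket="my-bucket",
)
config_json = json.dumps(example_config, indent=2)

if __name__ == "__main__":
    print(config_json)  # redirect the output to config.json
```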

Full list of options in config.json:

| Property | Type | Required? | Description |
|---|---|---|---|
| host | String | Yes | Redshift Host |
| port | Integer | Yes | Redshift Port |
| user | String | Yes | Redshift User |
| password | String | Yes | Redshift Password |
| dbname | String | Yes | Redshift Database name |
| aws_profile | String | No | AWS profile name for profile based authentication. If not provided, AWS_PROFILE environment variable will be used. |
| aws_access_key_id | String | No | S3 Access Key Id. Used for S3 and Redshift copy operations. If not provided, AWS_ACCESS_KEY_ID environment variable will be used. |
| aws_secret_access_key | String | No | S3 Secret Access Key. Used for S3 and Redshift copy operations. If not provided, AWS_SECRET_ACCESS_KEY environment variable will be used. |
| aws_session_token | String | No | S3 AWS STS token for temporary credentials. If not provided, AWS_SESSION_TOKEN environment variable will be used. |
| aws_redshift_copy_role_arn | String | No | AWS Role ARN to be used for the Redshift COPY operation. If provided, it is used instead of the given AWS keys for the COPY operation; the keys are still used for other S3 operations. |
| s3_acl | String | No | S3 Object ACL |
| s3_bucket | String | Yes | S3 Bucket name |
| s3_key_prefix | String | | (Default: None) A static prefix before the generated S3 key names. Using prefixes you can upload files into specific directories in the S3 bucket. |
| copy_options | String | | (Default: EMPTYASNULL BLANKSASNULL TRIMBLANKS TRUNCATECOLUMNS TIMEFORMAT 'auto' COMPUPDATE OFF STATUPDATE OFF) Parameters to use in the COPY command when loading data to Redshift. Some basic file formatting parameters are fixed values, and overriding them with custom values is not recommended. They are: CSV GZIP DELIMITER ',' REMOVEQUOTES ESCAPE |
| batch_size_rows | Integer | | (Default: 100000) Maximum number of rows in each batch. At the end of each batch, the rows in the batch are loaded into Redshift. |
| flush_all_streams | Boolean | | (Default: False) Flush and load every stream into Redshift when one batch is full. Warning: This may trigger the COPY command to use files with a low number of records, and may cause performance problems. |
| parallelism | Integer | | (Default: 0) The number of threads used to flush tables. 0 will create a thread for each stream, up to max_parallelism. -1 will create a thread for each CPU core. Any other positive number will create that number of threads, up to max_parallelism. |
| max_parallelism | Integer | | (Default: 16) Max number of parallel threads to use when flushing tables. |
| default_target_schema | String | | Name of the schema where the tables will be created. If schema_mapping is not defined then every stream sent by the tap is loaded into this schema. |
| default_target_schema_select_permissions | String | | Grant USAGE privilege on newly created schemas and grant SELECT privilege on newly created tables to a specific list of users or groups. Example: {'users': ['user_1','user_2'], 'groups': ['group_1', 'group_2']} If schema_mapping is not defined then every stream sent by the tap is granted accordingly. |
| schema_mapping | Object | | Useful if you want to load multiple streams from one tap to multiple Redshift schemas. If the tap sends the stream_id in <schema_name>-<table_name> format then this option overwrites the default_target_schema value. Note that using schema_mapping you can overwrite the default_target_schema_select_permissions value to grant SELECT permissions to different groups per schema, or optionally you can create indices automatically for the replicated tables. Note: This is an experimental feature, and it is recommended to use it via PipelineWise YAML files that will generate the object mapping in the right JSON format. For further info check a PipelineWise YAML Example. |
| disable_table_cache | Boolean | | (Default: False) By default the connector caches the available table structures in Redshift at startup. In this way it doesn't need to run additional queries when ingesting data to check if altering the target tables is required. With the disable_table_cache option you can turn off this caching. You will always see the most recent table structures, but this adds extra query runtime. |
| add_metadata_columns | Boolean | | (Default: False) Metadata columns add extra row level information about data ingestion (i.e. when the row was read in the source, when it was inserted or deleted in Redshift, etc.). Metadata columns are created automatically by adding extra columns to the tables with the column prefix _SDC_. The metadata columns are documented at https://transferwise.github.io/pipelinewise/data_structure/sdc-columns.html. Enabling metadata columns will flag the deleted rows by setting the _SDC_DELETED_AT metadata column. Without the add_metadata_columns option the deleted rows from Singer taps will not be recognisable in Redshift. |
| hard_delete | Boolean | | (Default: False) When the hard_delete option is true, DELETE SQL commands will be performed in Redshift to delete rows in tables. This is achieved by continuously checking the _SDC_DELETED_AT metadata column sent by the Singer tap. Because deleting rows requires metadata columns, the hard_delete option automatically enables the add_metadata_columns option as well. |
| data_flattening_max_level | Integer | | (Default: 0) Object type RECORD items from taps can be loaded into VARIANT columns as JSON (default) or we can flatten the schema by creating columns automatically. When the value is 0 (default), the flattening functionality is turned off. |
| primary_key_required | Boolean | | (Default: True) Log based and Incremental replications on tables with no Primary Key cause duplicates when merging UPDATE events. When set to true, stop loading data if no Primary Key is defined. |
| validate_records | Boolean | | (Default: False) Validate every single record message against the corresponding JSON schema. This option is disabled by default, and invalid RECORD messages will fail only at load time in Redshift. Enabling this option will detect invalid records earlier but could cause performance degradation. |
| skip_updates | Boolean | No | (Default: False) Do not update existing records when Primary Key is defined. Useful to improve performance when records are immutable, e.g. events. |
| compression | String | No | The compression method to use when writing files to S3 and running Redshift COPY. The currently supported methods are gzip or bzip2. Defaults to none (""). |
| slices | Integer | No | The number of slices to split files into prior to running COPY on Redshift. This should be set to the number of Redshift slices. The number of slices per node depends on the node size of the cluster; run SELECT COUNT(DISTINCT slice) slices FROM stv_slices to calculate this. Defaults to 1. |
| temp_dir | String | | (Default: platform-dependent) Directory of temporary CSV files with RECORD messages. |
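
The description of the parallelism option can be read as a small decision rule. The sketch below encodes that rule as described in the option list; it is an interpretation of the README text, not the connector's actual code, and the function name `resolve_thread_count` is hypothetical.

```python
import os


def resolve_thread_count(parallelism: int, n_streams: int, max_parallelism: int = 16) -> int:
    """Return the number of flush threads implied by the parallelism setting.

    -1 -> one thread per CPU core
     0 -> one thread per stream, capped at max_parallelism
    >0 -> that many threads, capped at max_parallelism
    """
    if parallelism == -1:
        # os.cpu_count() may return None on some platforms; fall back to 1.
        return os.cpu_count() or 1
    if parallelism == 0:
        return min(n_streams, max_parallelism)
    if parallelism > 0:
        return min(parallelism, max_parallelism)
    raise ValueError("parallelism must be -1, 0, or a positive integer")


if __name__ == "__main__":
    print(resolve_thread_count(parallelism=0, n_streams=40))  # capped by the default maximum
```

With the defaults (parallelism 0 and a maximum of 16), a tap sending 40 streams would be flushed with 16 threads, while a tap sending 3 streams would use 3.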

To run tests:

- Install python dependencies in a virtual env:

- To run unit tests:

- To run integration tests define environment variables first:

To run pylint:

- Install python dependencies and run python linter

License

Apache License Version 2.0

See LICENSE for the full text.

Release history

1.6.0

1.5.0

1.4.1

1.4.0

1.3.0

1.2.1

1.2.0

1.1.0

1.0.8

1.0.7

1.0.6

1.0.5

1.0.4

1.0.3

1.0.2

1.0.1

1.0.0

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

| Filename, size | File type | Python version | Upload date | Hashes |
|---|---|---|---|---|
| pipelinewise_target_redshift-1.6.0-py3-none-any.whl (23.1 kB) | Wheel | py3 | | |
| pipelinewise-target-redshift-1.6.0.tar.gz (18.4 kB) | Source | None | | |

Hashes for pipelinewise_target_redshift-1.6.0-py3-none-any.whl

| Algorithm | Hash digest |
|---|---|
| SHA256 | 9d0dad7f8a6a670e3b8a79eb594ec0930da0b497841d38051f6b6d402ee94d69 |
| MD5 | 82afb4c3e9cdcd7d706a28c7c7347763 |
| BLAKE2-256 | 0bdee9fe8d903608f7acf2e1eed261bee5d4ff1964fe6002e645db4d197cb95c |

Hashes for pipelinewise-target-redshift-1.6.0.tar.gz

| Algorithm | Hash digest |
|---|---|
| SHA256 | d73b8b95cb09c3fb33b23f45eba750daf40de242c86bbd438b85b8f811d01300 |
| MD5 | fc3b91279d7baca9432e695f6f34b4ae |
| BLAKE2-256 | 36a20b9ce51c94a2b85170a704f994b2cc51c2e138af255c98417e67f08b3ad0 |
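
A downloaded file can be checked against the published digests with Python's standard hashlib module. The sketch below is a minimal example that covers the SHA256 and MD5 entries; the helper names are hypothetical, and the file path in the usage section assumes the wheel was saved to the current directory.

```python
import hashlib
import hmac


def file_digest(path: str, algorithm: str = "sha256", chunk_size: int = 65536) -> str:
    """Return the hex digest of a file, reading it in chunks to limit memory use."""
    hasher = hashlib.new(algorithm)
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            hasher.update(chunk)
    return hasher.hexdigest()


def matches_published_hash(path: str, expected_hex: str, algorithm: str = "sha256") -> bool:
    """Compare the file's digest with a published hex digest (case-insensitive)."""
    return hmac.compare_digest(file_digest(path, algorithm), expected_hex.strip().lower())


if __name__ == "__main__":
    # Assumes the wheel listed above was downloaded into the current directory.
    wheel = "pipelinewise_target_redshift-1.6.0-py3-none-any.whl"
    expected = "9d0dad7f8a6a670e3b8a79eb594ec0930da0b497841d38051f6b6d402ee94d69"
    print(matches_published_hash(wheel, expected))
```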